Bash script to determine if I have a routable IP address

At my home I have a dynamic IP address which changes at least daily. I have an arrangement with my ISP to always have a routable IP. However, sometimes they mess up and give me a non-routable IP. I would like to be able to detect this from within a shell script so that the shell script can notify me and take other action.

Here's what I'm doing now: I have a remote Raspberry Pi set up with a reverse SSH tunnel to my iMac at home. I can easily detect whether that tunnel is up. If it is down, the likely (but not certain) cause is a non-routable IP at home. The router at home is a Deco X20.

Is there a better way to determine whether my home IP address is routable?
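One possible building block, assuming "non-routable" here means a private or carrier-grade NAT (CGNAT) address on the router's WAN side: classify the address by range. The function name `is_non_routable_ipv4` is hypothetical, and how the WAN address is read from the Deco X20 is not covered, so treat that input as an assumption. An external "what is my IP" service will not help on its own, because behind CGNAT it reports the ISP's shared public address rather than the one assigned to the router.

```bash
#!/usr/bin/env bash
# Sketch: return 0 if an IPv4 address lies in a range that is not routable
# on the public Internet, 1 if it looks public, 2 if the input is malformed.
# Octets above 255 are not rejected; this only checks the dotted-quad shape.
is_non_routable_ipv4() {
    local -r ip="$1"
    local -r dotted_quad='^([0-9]{1,3}\.){3}[0-9]{1,3}$'
    local a b _

    [[ "${ip}" =~ ${dotted_quad} ]] || return 2
    IFS=. read -r a b _ <<< "${ip}"

    # Force base 10 so an octet such as "010" is not parsed as octal
    a=$((10#${a}))
    b=$((10#${b}))

    (( a == 10 )) && return 0                          # 10.0.0.0/8     RFC 1918
    (( a == 172 && b >= 16 && b <= 31 )) && return 0   # 172.16.0.0/12  RFC 1918
    (( a == 192 && b == 168 )) && return 0             # 192.168.0.0/16 RFC 1918
    (( a == 100 && b >= 64 && b <= 127 )) && return 0  # 100.64.0.0/10  RFC 6598 (CGNAT)
    (( a == 127 )) && return 0                         # 127.0.0.0/8    loopback
    (( a == 169 && b == 254 )) && return 0             # 169.254.0.0/16 link-local
    return 1
}
```

A monitoring script could call `is_non_routable_ipv4 "${wan_ip}"` and send a notification when it returns 0, where `wan_ip` is whatever the router reports as its WAN address.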

https://redd.it/1slznt2

@r_bash

Bash scripts to set up your Yubikey to work with GitHub (OpenGPG, SSH)

https://github.com/andrinoff/yubikey-github

A pretty straightforward guide, as well as 2 automatic scripts, that you can run to set it up for you (could be buggy)

Look forward to contributions!

https://redd.it/1snevss

@r_bash

Persistent Sidebar Pane within TMUX that tracks your AI Agent Sessions

https://redd.it/1snrhxc

@r_bash

slate — one command to theme your terminal + prompt + CLI tools in sync (Rust, macOS/Linux)

Built this over the last few months, tagged 0.1.1. One command sets up a coordinated palette across your shell prompt (via Starship), bat, delta, eza, lazygit, fastfetch, tmux, and your terminal emulator (Ghostty/Kitty/Alacritty).

For bash specifically, slate adds a single marker-block to your `~/.bashrc` (or `~/.bash_profile` on macOS) that sources a managed env file. `slate clean` removes that block cleanly: no orphaned exports, no leftover state.

`cargo install slate-cli` or `brew install MoonMao42/homebrew-tap/slate-cli`

Repo: https://github.com/MoonMao42/slate

Happy to hear feedback on the shell integration — especially the marker-block approach vs alternatives like a standalone rc snippet file.
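For readers weighing the marker-block approach, here is a minimal sketch of the general technique. It is not slate's implementation: the marker strings and the function names `add_block` and `remove_block` are hypothetical, chosen only to show how a managed block can be added idempotently and removed without touching the rest of the file.

```bash
#!/usr/bin/env bash
# Hypothetical markers; a real tool would pick its own.
readonly MARKER_BEGIN="# >>> managed block >>>"
readonly MARKER_END="# <<< managed block <<<"

# Delete everything between the markers (inclusive); no-op if the file
# does not exist or contains no block.
remove_block() {
    local -r rc_file="$1"
    local tmp
    [[ -f "${rc_file}" ]] || return 0
    tmp="$(mktemp)" || return 1
    awk -v begin="${MARKER_BEGIN}" -v end="${MARKER_END}" '
        $0 == begin { skipping = 1; next }
        $0 == end   { skipping = 0; next }
        !skipping   { print }
    ' "${rc_file}" > "${tmp}" && cat "${tmp}" > "${rc_file}"
    local -r status="$?"
    rm -f "${tmp}"
    return "${status}"
}

# Append a block that sources env_file, replacing any older copy first
# so repeated calls leave exactly one block.
add_block() {
    local -r rc_file="$1"
    local -r env_file="$2"
    remove_block "${rc_file}" || return 1
    {
        printf '%s\n' "${MARKER_BEGIN}"
        printf '[ -f "%s" ] && . "%s"\n' "${env_file}" "${env_file}"
        printf '%s\n' "${MARKER_END}"
    } >> "${rc_file}"
}
```

Writing back with `cat` rather than `mv` keeps the rc file's permissions and any symlink intact, which matters for dotfiles managed from a separate repository.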

https://redd.it/1so42dt

@r_bash

Automate MySQL Backups to S3 with a Pro-Grade Script (And Never Lose Data Again)

https://wgetskills.substack.com/p/automate-mysql-backups-to-s3-with

https://redd.it/1soi17i

@r_bash

This shell script (`mysql_backup.sh`) automates MySQL database backups, keeps a rotating set of backups (newest 7 days intended), compresses them, uploads them to Amazon S3, and sends a notification email. `#!/bin/sh # mysql_backup.sh: Backup MySQL databases…`

Unable to divide string into array

~~~

#!/bin/bash

cd /System/Applications

files=$(ls -a)

IFS=' ' read -ra fileArray <<< $files

#I=0

#while $I -le ${#fileArray[@} ]; do

#echo ${fileArrayI}

#I=$((I++))

#done

#for I in ${fileArray*}; do

#echo $I

#done

#echo $files

~~~

I wrote code to get all of the files in a directory and then put each file into an array. However, when I try to print each element in the array, it only prints the first one. What am I doing wrong?

(The comments show my previous attempts to fix the problem and/or previous code, review them as needed.)
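Two things in the script can each produce a single element. `read` consumes only one line, so if `$files` still contains newlines, only the first entry reaches the array. And `$fileArray` written without an index expands to element 0 only. A minimal sketch that avoids parsing `ls` output altogether, using a glob instead (the function name `list_entries` is hypothetical):

```bash
#!/usr/bin/env bash
# Sketch: collect every entry of a directory, dotfiles included, into an
# array and print one name per line. The body runs in a subshell, so the
# shopt changes do not leak into the caller.
list_entries() (
    dir="$1"
    # dotglob: let * match hidden files (never "." or "..")
    # nullglob: an empty directory yields an empty array, not a literal "*"
    shopt -s dotglob nullglob
    entries=("${dir}"/*)
    for entry in "${entries[@]}"; do
        # Strip the leading directory so only the file name is printed
        printf '%s\n' "${entry##*/}"
    done
)
```

Calling `list_entries /System/Applications` prints each entry on its own line, and names containing spaces stay intact because the glob result is never word-split.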

https://redd.it/1spgrvm

@r_bash

If everything works, the script below runs without issues. If something fails, it leaves Docker containers running in the background. How do I send an email with the error message and shut the containers down when something goes wrong?

# Context

- AWS RDS is running PostgreSQL 18.1

- EC2 doesn't let you install the latest Postgres since the package manager hasn't made it available yet

- So running a docker container for postgres 18.1

- It needs to copy a script file called docker_pg_dump.sh to the container

- This script will run a pg_dump and generate a .tar.gz.br file

- This file is transferred from container back to ec2 instance

- This file needs to be uploaded to AWS S3

- Email is sent and then the file is deleted from ec2

- The script below works when every step succeeds

- If any step goes wrong (for example, the container already exists or the connection fails), it leaves Docker containers dangling and I don't get an email containing the actual error message

- How do I get the actual error message and the failing step into the email, and shut the containers down, when something goes wrong?
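One way the last two points could be approached is a wrapper that records the failing step and its stderr, combined with a single EXIT trap that reports and cleans up. This is a sketch under assumptions, not a drop-in fix: `run_step`, `notify_failure`, `force_remove_container` and `on_exit` are hypothetical names, and `notify_failure` stands in for a call to the script's own `send_email` with a failure template.

```bash
#!/usr/bin/env bash
# Sketch only: remember which step failed and what it wrote to stderr,
# then report and clean up from one EXIT trap.

container_name="pg_dumper"   # the container name used in the posted script
failed_step=""
error_message=""

# Run a named step, discard its stdout and capture its stderr.
# Redirection order matters: 2>&1 first points stderr at the capture,
# then >/dev/null silences stdout.
run_step() {
    local -r step_name="$1"
    shift
    local captured
    if ! captured="$("$@" 2>&1 >/dev/null)"; then
        failed_step="${step_name}"
        error_message="${captured}"
        return 1
    fi
}

# Hypothetical hook: replace with an email call that includes both values.
notify_failure() {
    printf 'FAILED step=%s error=%s\n' "$1" "$2" >&2
}

# Hypothetical hook: rm --force also removes a container that is still running.
force_remove_container() {
    docker container rm --force "$1" >/dev/null 2>&1 || true
}

# EXIT trap handler: on entry, $? still holds the script's exit status.
on_exit() {
    local -r exit_code="$?"
    if (( exit_code != 0 )); then
        notify_failure "${failed_step:-unknown}" "${error_message:-no stderr captured}"
    fi
    force_remove_container "${container_name}"
}

# Intended use (commented out so the sketch has no side effects):
#   trap on_exit EXIT
#   run_step "install dependencies" install_dependencies "${container_name}" || exit 1
```

Two caveats apply to the posted script. Its `log_error` prints to stdout, so those messages would need `>&2` to be captured this way. And steps whose stdout is needed, such as `fetch_table_row_counts`, cannot go through `run_step` as written because it discards stdout.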

```

#!/usr/bin/env bash

# This script runs on AWS EC2

# Its job is to orchestrate a pg_dump inside a docker container corresponding to the PostgreSQL version used by the AWS RDS database

# The package manager on EC2 might not have the same version of PostgreSQL as AWS RDS available as an installable package

# The pg_dump command will only work if the pg_dump executable used is the same version as the database major version used by AWS RDS

# The pg_dump executable from the docker container is used to perform a dump of the AWS RDS database

# Run a container for PostgreSQL with the same version as the one used by AWS RDS

# Install brotli and tar inside this container

# Copy .pgpass file from AWS EC2 to the container

# Copy or download the AWS RDS certificate needed by PostgreSQL server

# Run the docker_dump.sh script with arguments required if any

# Copy the generated dump file back to AWS EC2 from the container

# Stop and remove the container

# We only require the error message in order to send an email

# We need data from the stderr stream but want nothing from the stdout stream

# For error message capture, refer to this answer https://unix.stackexchange.com/a/499443/290371

script_directory=$(cd -- "$(dirname -- "${BASH_SOURCE[0]}")" &>/dev/null && pwd)

cd "${script_directory}" || exit 1

# shellcheck source=/dev/null

source "${script_directory}/.env"

function log_info() {

local -r message="$1"

printf "INFO: %s\n" "${message}"

}

function log_error() {

local -r message="$1"

printf "ERROR: %s\n" "${message}"

}

function cleanup() {

local exit_code="$1"

log_info "cleanup function was called ${exit_code}"

}

function copy_files_from_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 2 ]]; then

log_error "Usage: copy_files_from_container <from_path> <to_path>"

return 1

fi

local -r from_path="$1"

local -r to_path="$2"

shift 2

if [[ -z "${from_path}" ]]; then

log_error "No value was supplied for from_path:${from_path}"

return 1

fi

if [[ -z "${to_path}" ]]; then

log_error "No value was supplied for to_path:${to_path}"

return 1

fi

if ! docker cp \

"${from_path}" \

"${to_path}"; then

return 1

fi

}

function copy_files_to_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 2 ]]; then

log_error "Usage: copy_files_to_container <from_path> <to_path>"

return 1

fi

local -r from_path="$1"

local -r to_path="$2"

shift 2

if [[ -z "${from_path}" ]]; then

log_error "No value was supplied for from_path:${from_path}"

return 1

fi

if [[ -z "${to_path}" ]]; then

log_error "No value was supplied for to_path:${to_path}"

return 1

fi

if ! docker cp \

"${from_path}" \

"${to_path}"; then

return 1

fi

}

function fetch_table_row_counts() {

if [[ -z "$(command -v psql)" ]]; then

log_error "The psql command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 4 ]]; then

log_error "Usage: fetch_table_row_counts <dbname> <host> <port> <username>"

return 1

fi

local -r dbname="$1"

local -r host="$2"

local -r port="$3"

local -r username="$4"

shift 4

if [[ -z "${dbname}" ]]; then

log_error "No value was supplied for dbname:${dbname}"

return 1

fi

if [[ -z "${host}" ]]; then

log_error "No value was supplied for host:${host}"

return 1

fi

if [[ -z "${port}" ]]; then

log_error "No value was supplied for port:${port}"

return 1

fi

if [[ -z "${username}" ]]; then

log_error "No value was supplied for username:${username}"

return 1

fi

local -r query="

SELECT

json_agg(row)

FROM

(

WITH tbl AS

(

SELECT

table_schema,

table_name

FROM

information_schema.tables

WHERE

table_name NOT LIKE 'pg_%'

AND table_schema IN

(

'public'

)

)

SELECT

table_name,

(

xpath('/row/c/text()', query_to_xml(format('select count(*) as c from %I.%I', table_schema, table_name), FALSE, TRUE, ''))

)

[1]::text::INT AS rows_n

FROM

tbl

ORDER BY

table_name ASC

) row;

"

if result=$(psql \

"--dbname=${dbname}" \

"--host=${host}" \

"--port=${port}" \

"--username=${username}" \

"--command=${query}" \

"--no-align" \

"--tuples-only"); then

printf "%s\n" "${result}"

return 0

else

return 1

fi

}

function install_dependencies() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 1 ]]; then

log_error "Usage: install_dependencies <container_name>"

return 1

fi

local -r container_name="$1"

shift 1

if [[ -z "${container_name}" ]]; then

log_error "No value was supplied for container_name:${container_name}"

return 1

fi

local -r command="apt-get update -qq --yes && apt-get install --no-install-recommends -qq --yes brotli tar && rm -rf /var/lib/apt/lists/*"

if ! docker container exec "${container_name}" /bin/bash -c "${command}"; then

return 1

fi

}

function prepare_template_data() {

if [[ -z "$(command -v jq)" ]]; then

log_error "The jq command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 3 ]]; then

log_error "Usage: prepare_template_data <key> <value> <template>"

return 1

fi

local -r key="$1"

local -r value="$2"

local -r template="$3"

shift 3

if [[ -z "${key}" ]]; then

log_error "No value was supplied for key:${key}"

return 1

fi

if [[ -z "${value}" ]]; then

log_error "No value was supplied for value:${value}"

return 1

fi

if [[ -z "${template}" ]]; then

log_error "No value was supplied for template:${template}"

return 1

fi

if ! jq --null-input --argjson "${key}" "${value}" "${template}"; then

return 1

fi

}

function remove_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 1 ]]; then

log_error "Usage: remove_container <container_name>"

return 1

fi

local -r container_name="$1"

shift 1

if [[ -z "${container_name}" ]]; then

log_error "No value was supplied for container_name:${container_name}"

return 1

fi

if ! docker container rm "${container_name}"; then

return 1

fi

}

function run_script_inside_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 7 ]]; then

log_error "Usage: run_script_inside_container <container_name> <dump_file_name> <dbname> <host> <password> <port> <username>"

return 1

fi

local -r container_name="$1"

local -r dump_file_name="$2"

local -r dbname="$3"

local -r host="$4"

local -r password="$5"

local -r port="$6"

local -r username="$7"

shift 7

if [[ -z "${container_name}" ]]; then

log_error "No value was supplied for container_name:${container_name}"

return 1

fi

if [[ -z "${dump_file_name}" ]]; then

log_error "No value was supplied for dump_file_name:${dump_file_name}"

return 1

fi

if [[ -z "${dbname}" ]]; then

log_error "No value was supplied for dbname:${dbname}"

return 1

fi

if [[ -z "${host}" ]]; then

log_error "No value was supplied for host:${host}"

return 1

fi

if [[ -z "${password}" ]]; then

log_error "No value was supplied for password:${password}"

return 1

fi

if [[ -z "${port}" ]]; then

log_error "No value was supplied for port:${port}"

return 1

fi

if [[ -z "${username}" ]]; then

log_error "No value was supplied for username:${username}"

return 1

fi

if ! docker container exec "${container_name}" /bin/bash -c "/root/docker_pg_dump.sh ${dump_file_name} ${dbname} ${host} ${password} ${port} ${username}"; then

return 1

fi

}

function save_files_to_s3() {

if [[ -z "$(command -v aws)" ]]; then

log_error "The aws command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 2 ]]; then

log_error "Usage: save_files_to_s3 <from_path> <to_path>"

return 1

fi

local -r from_path="$1"

local -r to_path="$2"

shift 2

if [[ -z "${from_path}" ]]; then

log_error "No value was supplied for from_path:${from_path}"

return 1

fi

if [[ -z "${to_path}" ]]; then

log_error "No value was supplied for to_path:${to_path}"

return 1

fi

if result="$(aws s3 cp "${from_path}" "${to_path}")"; then

return 0

else

log_error "AWS S3 cp execution failed"

return 1

fi

}

function send_email() {

if [[ -z "$(command -v aws)" ]]; then

log_error "The aws command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 4 ]]; then

log_error "Usage: send_email <from_email> <to_email> <template_data> <template>"

return 1

fi

local -r from_email="$1"

local -r to_email="$2"

local -r template_data="$3"

local -r template="$4"

shift 4

if [[ -z "${from_email}" ]]; then

log_error "No value was supplied for from_email:${from_email}"

return 1

fi

if [[ -z "${to_email}" ]]; then

log_error "No value was supplied for to_email:${to_email}"

return 1

fi

if [[ -z "${template_data}" ]]; then

log_error "No value was supplied for template_data:${template_data}"

return 1

fi

if [[ -z "${template}" ]]; then

log_error "No value was supplied for template:${template}"

return 1

fi

if ! aws ses send-templated-email \

--destination "ToAddresses=${to_email}" \

--source "${from_email}" \

--template "${template}" \

--template-data "${template_data}"; then

return 1

fi

}

function start_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 6 ]]; then

log_error "Usage: start_container <container_name> <dbname> <host> <password> <port> <username>"

return 1

fi

local -r container_name="$1"

local -r dbname="$2"

local -r host="$3"

local -r password="$4"

local -r port="$5"

local -r username="$6"

shift 6

if [[ -z "${container_name}" ]]; then

log_error "No value was supplied for container_name:${container_name}"

return 1

fi

if [[ -z "${dbname}" ]]; then

log_error "No value was supplied for dbname:${dbname}"

return 1

fi

if [[ -z "${host}" ]]; then

log_error "No value was supplied for host:${host}"

return 1

fi

if [[ -z "${password}" ]]; then

log_error "No value was supplied for password:${password}"

return 1

fi

if [[ -z "${port}" ]]; then

log_error "No value was supplied for port:${port}"

return 1

fi

if [[ -z "${username}" ]]; then

log_error "No value was supplied for username:${username}"

return 1

fi

# Don't use the --env-file option here because it doesn't work with single and double quotes inside .env files when used with docker

# PGPASSWORD is required by the psql command whereas POSTGRES_PASSWORD is required by docker

# Include both environment variables else the command below will not work

if ! docker container run \

--detach \

--env "POSTGRES_DB=${dbname}" \

--env "POSTGRES_HOST=${host}" \

--env "POSTGRES_PASSWORD=${password}" \

--env "PGPASSWORD=${password}" \

--env "POSTGRES_PORT=${port}" \

--env "POSTGRES_USER=${username}" \

--name "${container_name}" \

--publish "${port}:${port}" \

"postgres:18.1-trixie"; then

return 1

fi

}

function stop_and_remove_container() {

local container_name="$1"

if ! stop_container "${container_name}"; then

return 1

fi

if ! remove_container "${container_name}"; then

return 1

fi

}

function stop_container() {

if [[ -z "$(command -v docker)" ]]; then

log_error "The docker command was not found, check if it is installed"

return 1

fi

if [[ "$#" -lt 1 ]]; then

log_error "Usage: stop_container <container_name>"

return 1

fi

local -r container_name="$1"

shift 1

if [[ -z "${container_name}" ]]; then

log_error "No value was supplied for container_name:${container_name}"

return 1

fi

if ! docker container stop "${container_name}"; then

return 1

fi

}

function main() {
    trap 'cleanup $LINENO' EXIT

    local -r POSTGRES_DB="${POSTGRES_DB}"
    local -r POSTGRES_HOST="${POSTGRES_HOST}"
    local -r POSTGRES_PASSWORD="${POSTGRES_PASSWORD}"
    local -r POSTGRES_PORT="${POSTGRES_PORT}"
    local -r POSTGRES_USER="${POSTGRES_USER}"
    local -r bucket_url="s3://some-bucket"
    local -r container_name="pg_dumper"
    local -r date_str="$(date +%d_%m_%y_%HH_%MM_%SS_%Z)"
    local -r email_template_success="template-success"
    local -r file_name_prefix="${POSTGRES_DB}"
    local -r file_name_suffix="${date_str}"
    local -r from_email="[email protected]"
    local -r to_email="[email protected]"
    local -r dump_file_name="${file_name_prefix}_${file_name_suffix}"
    local email_template_data
    local rows

    if ! start_container \
        "${container_name}" \
        "${POSTGRES_DB}" \
        "${POSTGRES_HOST}" \
        "${POSTGRES_PASSWORD}" \
        "${POSTGRES_PORT}" \
        "${POSTGRES_USER}"; then
        return 1
    fi

    if ! install_dependencies \
        "${container_name}"; then
        return 1
    fi

    if ! copy_files_to_container \
        "${script_directory}/docker_pg_dump.sh" \
        "${container_name}:/root"; then
        return 1
    fi

    if ! run_script_inside_container \
        "${container_name}" \
        "${dump_file_name}" \
        "${POSTGRES_DB}" \
        "${POSTGRES_HOST}" \
        "${POSTGRES_PASSWORD}" \
        "${POSTGRES_PORT}" \
        "${POSTGRES_USER}"; then
        return 1
    fi

    if ! copy_files_from_container \
        "${container_name}:/tmp/${dump_file_name}.tar.gz.br" \
        "/tmp"; then
        return 1
    fi

    if ! stop_and_remove_container \
        "${container_name}"; then
        return 1
    fi

    if rows=$(fetch_table_row_counts \
        "${POSTGRES_DB}" \
        "${POSTGRES_HOST}" \
        "${POSTGRES_PORT}" \
        "${POSTGRES_USER}"); then
        log_info "We got the rows: ${rows}"
    else
        return 1
    fi

    # Handle the case where nothing is returned because you ran it on an empty database with no tables
    if [[ -z "${rows}" ]]; then
        rows="[]"
    fi

    # shellcheck disable=SC2016
    if email_template_data=$(prepare_template_data "tables" "${rows}" '{tables:$tables}'); then
        log_info "We got the email template data: ${email_template_data}"
    else
        return 1
    fi

    if ! save_files_to_s3 \
        "/tmp/${dump_file_name}.tar.gz.br" \
        "${bucket_url}"; then
        return 1
    fi

    if ! send_email \
        "${from_email}" \
        "${to_email}" \
        "${email_template_data}" \
        "${email_template_success}"; then
        return 1
    fi

    # The backup file has been saved to S3 and we no longer need it locally
    rm -f "/tmp/${dump_file_name}.tar.gz.br"
}

main "$@"

```

https://redd.it/1spjark

@r_bash


Reddit

From the bash community on Reddit

Explore this post and more from the bash community

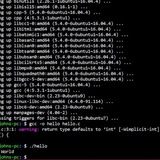

Showcase Termyt: A professional, pure Bash CLI wrapper (with .deb packaging & CI/CD)

Hi everyone!

I wanted to share a project I’ve been developing called Termyt.

Unlike heavy terminal emulators, Termyt is a pure Bash script designed to be a fast, efficient CLI wrapper. It’s built for those who need a streamlined way to manage their workflows without the overhead of a full GUI application.

# Why Termyt?

Pure Bash: No heavy dependencies, no bloat. Just fast, native execution.

Portable: Since it's a shell script, it's highly adaptable across different environments.

Debianized: I’ve packaged it into a professional `.deb` file, following all the standard Linux filesystem hierarchies (`/usr/bin`, `/usr/share/doc`, etc.).

Security & Transparency: The code is open, readable, and easy to audit. You can find the source code (`.sh` file) on the main branch, inside `usr/bin`, or packed in the tarballs and ZIPs that the CI/CD pipeline (GitHub Actions) generates for each release.

# Recent Improvements:

CI/CD Pipeline: Even for a Bash script, I’ve implemented GitHub Actions to automate the packaging and ensure everything is reproducible.

Professional Layout: Cleaned up the repo to meet professional standards, including man pages support and proper script documentation.

Badges & Documentation: Added real-time badges to track the build status and versioning.

# Link:

GitHub Repository: https://github.com/Rob1c/termyt

Lemme know your thoughts!

https://redd.it/1spyn3v

@r_bash

Termyt is an easy-to-use CLI Tool for downloading audio and videos from streaming sites like YouTube, yt-dlp based. (Protected by CC-BY-NC License 4.0) - Rob1c/Termyt

What is the difference between having 2 separate ERR and EXIT traps vs a single EXIT trap for handling everything?

I have a function called test_command that looks like this

```
function test_command() {
    local -r command="$1"

    if eval "${command}"; then
        printf "%s\n" "INFO: the command completed its execution successfully"
        return 0
    else
        printf "%s\n" "ERROR: the command failed to execute"
        return 1
    fi
}
```

In this invocation, I am calling a main() function that calls this command with a single trap

```
function main() {
    trap 'handle_exit $?' EXIT

    local command="$1"

    case "${command}" in
    "ls") ;;
    *)
        command="bad_command"
        ;;
    esac

    printf "%s\n" "This is our error log file ${ERROR_LOG_FILE}"

    if ! test_command "${command}" 2>"${ERROR_LOG_FILE}"; then
        return 1
    fi
}

main "$@"
```

In case you are wondering, this is what handle_exit actually looks like

```
#!/usr/bin/env bash

ERROR_LOG_FILE=$(mktemp)

function handle_exit() {
    local error_message
    local -r exit_code="$1"

    error_message="$(cat "${ERROR_LOG_FILE}")"

    if [[ -z "${error_message}" ]]; then
        printf "handle_exit: INFO: date:%s, exit_code::%s, error:%s\n" "$(date)" "${exit_code}" "No errors were detected"
    else
        printf "handle_exit: ERROR: date:%s, exit_code::%s, error:%s\n" "$(date)" "${exit_code}" "${error_message}"
    fi

    if [[ -f "${ERROR_LOG_FILE}" ]]; then
        rm -f "${ERROR_LOG_FILE}"
    fi
}
```

Alternatively, I can also make 2 functions and have the main function handle ERR and EXIT separately

```
function main() {
    trap 'handle_error' ERR
    trap 'handle_exit $?' EXIT

    local command="$1"

    case "${command}" in
    "ls") ;;
    *)
        command="bad_command"
        ;;
    esac

    printf "%s\n" "This is our error log file ${ERROR_LOG_FILE}"

    if ! test_command "${command}" 2>"${ERROR_LOG_FILE}"; then
        return 1
    fi
}

main "$@"
```

In which case I'll need 2 functions

```
#!/usr/bin/env bash

ERROR_LOG_FILE=$(mktemp)

function handle_error() {
    local arg="$1"

    printf "%s\n" "handle_error called at date:$(date) ${arg}"

    if [[ -f "${ERROR_LOG_FILE}" ]]; then
        rm -f "${ERROR_LOG_FILE}"
    fi
}

function handle_exit() {
    local arg="$1"

    printf "%s\n" "handle_exit called at date:$(date) ${arg}"

    if [[ -f "${ERROR_LOG_FILE}" ]]; then
        rm -f "${ERROR_LOG_FILE}"
    fi
}
```

Quick questions based on the stuff above

- Do I need just the EXIT trap, or do I need both ERR and EXIT?

- Is there any tradeoff involved in using one vs two traps like this?

- Where should I remove that log file for success and failure cases?

- Is this a good basic setup for writing more complex stuff, like calling external commands such as psql, aws etc?

- Is there a name for this design pattern in bash?
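
One way to look at the single-trap option, as a minimal sketch and not a definitive answer: a lone EXIT trap receives the exit status and can do both the reporting and the log-file cleanup, so the file is removed in exactly one place. Per the Bash manual, an ERR trap is not run for commands tested by `if` or negated with `!`, which is how `test_command` is called above, so a separate ERR handler may never fire on that path. The names `run_with_exit_trap` and `report_and_cleanup` are hypothetical, and the function body is a subshell (an assumption made here so the EXIT trap fires when the function ends instead of when the whole script ends).

```bash
#!/usr/bin/env bash

# Hypothetical handler: reports the outcome, then removes the log file.
# Called only from the EXIT trap, so cleanup happens once on every path.
report_and_cleanup() {
    local -r exit_code="$1"
    local -r log_file="$2"

    if (( exit_code == 0 )); then
        printf 'INFO: exit_code=%s\n' "${exit_code}"
    else
        printf 'ERROR: exit_code=%s, stderr=%s\n' "${exit_code}" "$(cat "${log_file}")"
    fi

    rm -f "${log_file}"
}

# Hypothetical wrapper: the ( ... ) body runs in a subshell, so the EXIT trap
# below fires as soon as the wrapped command finishes, and log_file does not
# leak into the caller.
run_with_exit_trap() (
    log_file="$(mktemp)"
    trap 'report_and_cleanup "$?" "${log_file}"' EXIT

    # Run the command given as arguments, capturing its stderr in the log file
    "$@" 2>"${log_file}"
)
```

Calling `run_with_exit_trap ls /tmp` prints the listing followed by the INFO line. A failing command prints the ERROR line with the captured stderr, and the function returns that command's exit status because the trap handler does not call `exit` itself.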

https://redd.it/1sqqvxg

@r_bash

I made my own sandboxed bash

I mainly built this for agents. Bash is one of their main tools but was designed for humans.

For example, we assume that getting no information after a mutating command means success. But for an agent, that silence has no particular meaning. If an agent runs `mv file1 file2`, it has no idea if the command worked, so it will immediately do an `ls` just to check.

The idea is to give instant feedback for every command:

```
> mkdir hello
# Stdout: Folder has been created ✔
```

with additional information like exit code, stderr, and a full diff of what changed (created, modified, deleted).

The other big problem is safety. For the majority of commands we can control the input and output, but it becomes problematic with commands like `python3 -c` or `node -e` that let an agent run untrusted code. That's why this bash is sandboxed by default using wasm to keep the host system safe.

I built it in TypeScript, so if there are any fellow bash and TypeScript lovers out there, I'd love to hear your thoughts. And if you ever feel like adding a command or two, that would be awesome too.
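
The instant-feedback idea can be approximated in plain bash, as a rough sketch only. This is not the project's implementation (which is written in TypeScript and sandboxed with wasm); `run_with_feedback` is a hypothetical helper that reports the exit code and stderr after every command, and it does not attempt the diff of created, modified, or deleted files.

```bash
#!/usr/bin/env bash

# Hypothetical wrapper: run a command and always print an explicit outcome,
# so silence is never the only signal of success.
run_with_feedback() {
    local stderr_file
    local exit_code=0

    stderr_file="$(mktemp)"

    # Run the command as given, capturing stderr separately
    "$@" 2>"${stderr_file}" || exit_code=$?

    if (( exit_code == 0 )); then
        printf 'ok: %s (exit code 0)\n' "$*"
    else
        printf 'failed: %s (exit code %d)\n' "$*" "${exit_code}"
        printf 'stderr: %s\n' "$(cat "${stderr_file}")"
    fi

    rm -f "${stderr_file}"
    return "${exit_code}"
}
```

For example, `run_with_feedback mkdir hello` prints an `ok:` line on success, and running it a second time prints a `failed:` line together with the error message from `mkdir`.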

Here is the repository: https://github.com/capsulerun/bash

https://redd.it/1srs68i

@r_bash

How to launch a program in bash.

Hello, I'm looking to launch a C program from bash. I usually launch the program with `sudo ./p`. If I write a bash script that launches it for me, what should it look like? I tried this: `#!/bin/bash sudo ./p`
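
A minimal sketch of such a launcher, assuming the compiled program is named `p` and sits in the directory the script is run from. If the attempt quoted above really had the command on the same line as the shebang, that is the likely problem: the shebang has to be alone on line 1, and anything after it on that line is handed to `/bin/bash` as an argument and never run as a command. `run_program` and `PRIVILEGE_CMD` are hypothetical names for this sketch; `PRIVILEGE_CMD` defaults to `sudo`.

```bash
#!/bin/bash
# Line 1 holds only the shebang; commands start on the following lines.

# Hypothetical helper: starts ./p from the current directory with elevated
# privileges and forwards any arguments to it.
# PRIVILEGE_CMD is an assumption of this sketch and defaults to sudo.
run_program() {
    "${PRIVILEGE_CMD:-sudo}" ./p "$@"
}
```

Adding `run_program "$@"` as the last line of the script, making it executable with `chmod +x`, and running it from the directory that contains `p` would then behave like typing `sudo ./p` by hand.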

https://redd.it/1ss1p31

@r_bash

recommendations for books to learn bash scripting from scratch

I’m somewhat of a beginner to bash scripting. I’m somewhat familiar with common shell commands and how to navigate a Linux CLI, as well as some very basic programming concepts.

However, I am looking for a way to learn bash scripting from zero. I'm looking for a good book or web resource to follow along with and get a baseline knowledge of scripting in bash.

I’m not a fan of AI and I want to be able to write my own scripts for automating tasks like connecting to my OpenVPN, setting up cron jobs for daily backups, file organization and management, etc.

https://redd.it/1ss65hf

@r_bash
