Soft Contamination Means Benchmarks Test Shallow Generalization https://arxiv.org/abs/2602.12413

arXiv.org

Soft Contamination Means Benchmarks Test Shallow Generalization

If LLM training data is polluted with benchmark test data, then benchmark performance gives biased estimates of out-of-distribution (OOD) generalization. Typical decontamination filters use n-gram...

Data Repetition Beats Data Scaling in Long-CoT Supervised Fine-Tuning https://arxiv.org/abs/2602.11149

arXiv.org

Data Repetition Beats Data Scaling in Long-CoT Supervised Fine-Tuning

Supervised fine-tuning (SFT) on chain-of-thought data is an essential post-training step for reasoning language models. Standard machine learning intuition suggests that training with more unique...

rePIRL: Learn PRM with Inverse RL for LLM Reasoning https://arxiv.org/abs/2602.07832

arXiv.org

rePIRL: Learn PRM with Inverse RL for LLM Reasoning

Process rewards have been widely used in deep reinforcement learning to improve training efficiency, reduce variance, and prevent reward hacking. In LLM reasoning, existing works also explore...

❤3

Tensor Decomposition for Non-Clifford Gate Minimization https://arxiv.org/abs/2602.15285

arXiv.org

Tensor Decomposition for Non-Clifford Gate Minimization

Fault-tolerant quantum computation requires minimizing non-Clifford gates, whose implementation via magic state distillation dominates the resource costs. While $T$-count minimization is...

👍3😈1

BRIDGE: Predicting Human Task Completion Time From Model Performance https://arxiv.org/abs/2602.07267

arXiv.org

BRIDGE: Predicting Human Task Completion Time From Model Performance

Evaluating the real-world capabilities of AI systems requires grounding benchmark performance in human-interpretable measures of task difficulty. Existing approaches that rely on direct human task...

😁1

Forwarded from Love. Death. Transformers.

If you're preparing for an interview at a decent place, this is worth reading

https://djdumpling.github.io/2026/01/31/frontier_training.html

Alex Wa’s Blog

frontier model training methodologies

How do labs train a frontier, multi-billion parameter model? We look towards seven open-weight frontier models: Hugging Face’s SmolLM3, Prime Intellect’s Intellect 3, Nous Research’s Hermes 4, OpenAI’s gpt-oss-120b, Moonshot’s Kimi K2, DeepSeek’s DeepSeek…

👍4🔥4

Forwarded from Градиент обреченный (Sergei Averkiev)

🔺 hf-mem

A utility that shows how much memory is needed to run a model from HF, its parameter count, and a breakdown of those parameters. It downloads only the metadata and computes everything from that.

uvx hf-mem --model-id Qwen/Qwen-Image

(here uvx runs hf-mem without installing it on the system)

There is an --experimental flag (it works for ForCausalLM and ForConditionalGeneration classes); with it, the tool also computes the size of the KV cache needed for inference at the given max-length and batch-size.

👉 https://github.com/alvarobartt/hf-mem
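For intuition, the kind of arithmetic such an estimate involves can be sketched as follows. This is a rough back-of-the-envelope sketch, not hf-mem's actual implementation: the function names, the dtype table, and the example model shape are all assumptions for illustration, and the KV cache formula is the standard one for a decoder with a fixed number of KV heads.

```python
# Bytes per element for a few common dtypes (illustrative subset, assumed labels).
DTYPE_BYTES = {"F32": 4, "F16": 2, "BF16": 2, "F8_E4M3": 1, "I8": 1}


def weight_bytes(param_counts: dict[str, int]) -> int:
    """Total parameter storage given a {dtype: parameter_count} breakdown."""
    return sum(DTYPE_BYTES[dtype] * count for dtype, count in param_counts.items())


def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    max_length: int,
    batch_size: int,
    dtype: str = "BF16",
) -> int:
    """Keys plus values (factor 2) for every layer, KV head, position and sequence."""
    per_token = 2 * num_layers * num_kv_heads * head_dim * DTYPE_BYTES[dtype]
    return per_token * max_length * batch_size


def to_gib(n_bytes: int) -> float:
    """Convert a byte count to GiB."""
    return n_bytes / 1024**3


if __name__ == "__main__":
    # Hypothetical model: 8e9 BF16 parameters, 32 layers, 8 KV heads, head_dim 128.
    weights = weight_bytes({"BF16": 8_000_000_000})
    cache = kv_cache_bytes(32, 8, 128, max_length=8192, batch_size=1)
    print(f"weights:  {to_gib(weights):.2f} GiB")
    print(f"kv cache: {to_gib(cache):.2f} GiB")
```

The numbers cover weights and cache only; activations and framework overhead come on top, so treat the result as a lower bound.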

👍12🔥5💩1

Benchmarks Saturate When The Model Gets Smarter Than The Judge https://arxiv.org/abs/2601.19532

arXiv.org

Benchmarks Saturate When The Model Gets Smarter Than The Judge

Benchmarks are important tools to track progress in the development of Large Language Models (LLMs), yet inaccuracies in datasets and evaluation methods consistently undermine their effectiveness....

SETI@home: Data Acquisition and Front-end Processing

https://iopscience.iop.org/article/10.3847/1538-3881/ade5a7

SETI@home: Data Analysis and Findings https://iopscience.iop.org/article/10.3847/1538-3881/ade5ab

iopscience.iop.org

SETI@home: Data Acquisition and Front-end Processing

SETI@home: Data Acquisition and Front-end Processing. Korpela, E. J., Anderson, D. P., Cobb, J., Lebofsky, M., Liu, W., Werthimer, D.

🆒1

Forwarded from сладко стянул

Learned from https://t.iss.one/tropicalgeometry/1095 that the optimality of the E8 lattice has also been formalized!* The blueprint is interesting to read (and this seems like a pleasant genre in general): the file contains an outline of the proof that is accessible to an outsider. It is also funny in places (see the picture)

https://thefundamentaltheor3m.github.io/Sphere-Packing-Lean/blueprint.pdf

🔥2

You Don't Need to Run Every Eval

https://fixupx.com/DimitrisPapail/status/2026531440414925307

📰 Article • FixupX

You Don't Need to Run Every Eval

I used Claude Code to build BenchPress a $0 benchmark prediction system, Codex to audit it for bugs, and Claude Sonnet to try to beat it for $1. Here's what I found: LLM evals are so low-rank (in fact

😁11👍2

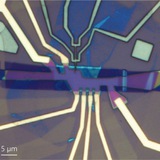

Charge-4e superconductor with parafermionic vortices: A path to universal topological quantum computation https://arxiv.org/abs/2602.06963

arXiv.org

Charge-$4e$ superconductor with parafermionic vortices: A path to...

Topological superconductors (TSCs) provide a promising route to fault-tolerant quantum information processing. However, the canonical Majorana platform based on $2e$ TSCs remains computationally...

☃1

Gradient Regularization Prevents Reward Hacking in Reinforcement Learning from Human Feedback and Verifiable Rewards https://arxiv.org/abs/2602.18037

arXiv.org

Gradient Regularization Prevents Reward Hacking in Reinforcement...

Reinforcement Learning from Human Feedback (RLHF) or Verifiable Rewards (RLVR) are two key steps in the post-training of modern Language Models (LMs). A common problem is reward hacking, where the...

Topological superconductivity with emergent vortex lattice in twisted semiconductors https://arxiv.org/abs/2602.15106

arXiv.org

Topological superconductivity with emergent vortex lattice in...

The coexistence of superconductivity and fractional quantum anomalous Hall (FQAH) effect has recently been observed in twisted MoTe$_2$ and theoretically demonstrated in a model of repulsively...

☃1

H-Neurons: On the Existence, Impact, and Origin of Hallucination-Associated Neurons in LLMs

https://arxiv.org/abs/2512.01797

via @Futuris

arXiv.org

H-Neurons: On the Existence, Impact, and Origin of...

Large language models (LLMs) frequently generate hallucinations -- plausible but factually incorrect outputs -- undermining their reliability. While prior work has examined hallucinations from...

❤1👍1

Bullshit Benchmark https://github.com/petergpt/bullshit-benchmark

GitHub

GitHub - petergpt/bullshit-benchmark: BullshitBench measures whether AI models challenge nonsensical prompts instead of confidently…

BullshitBench measures whether AI models challenge nonsensical prompts instead of confidently answering them, created by Peter Gostev. - petergpt/bullshit-benchmark

👀3👍1

Scaling Diffusion Language Models via Adaptation from Autoregressive Models https://arxiv.org/abs/2410.17891

arXiv.org

Scaling Diffusion Language Models via Adaptation from Autoregressive Models

Diffusion Language Models (DLMs) have emerged as a promising new paradigm for text generative modeling, potentially addressing limitations of autoregressive (AR) models. However, current DLMs have...

Forwarded from ∅

Bayesians Commit the Gambler's Fallacy

Abstract:

The gambler's fallacy is the tendency to expect random processes to switch more often than they actually do—for example, to assign a higher probability to heads after a streak of tails. It's often taken to be evidence for irrationality. It isn't. Rather, it's to be expected from a group of Bayesians who begin with causal uncertainty, and then observe unbiased data from an (in fact) statistically independent process. Although they increase their confidence that the outcomes are independent, they do so in an asymmetric way—ruling out “streaky” hypotheses more quickly than “switchy” ones. Their expectations depend on this balance of uncertainty; as a result, the majority (and the average) exhibit the gambler's fallacy, expecting a heads after a string of tails. If they have limited memory, this tendency persists even with arbitrarily-large amounts of data. In fact, such Bayesians exhibit a variety of the empirical trends found in studies of the gambler's fallacy. They expect switches after short streaks but continuations after long ones; these nonlinear expectations vary with their familiarity with the causal system; their predictions depend on the sequence they've just seen; they produce sequences that are too switchy; and they exhibit greater rates of the gambler's fallacy in binary predictions than in probability estimates. In short: what's been thought to be evidence for irrationality may instead be rational responses to limited data and memory.

https://onlinelibrary.wiley.com/doi/10.1111/cogs.70171

Wiley Online Library

Bayesians Commit the Gambler's Fallacy

The gambler's fallacy is the tendency to expect random processes to switch more often than they actually do—for example, to assign a higher probability to heads after a streak of tails. It's often ta...
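The setup the abstract describes, an agent unsure whether a process is streaky, switchy or independent, can be sketched as a posterior over switch rates. This is a minimal sketch of the update step only: the three switch rates, the uniform prior and the function names are assumptions for illustration, not the paper's model, and it makes no attempt to reproduce the paper's findings (which depend on its specific hypotheses and memory limits).

```python
def posterior_over_switch_rates(sequence, switch_rates, prior):
    """Bayes update where hypothesis s says consecutive outcomes differ with probability s."""
    weights = list(prior)
    for prev, curr in zip(sequence, sequence[1:]):
        switched = prev != curr
        # Likelihood of this transition under each hypothesis.
        weights = [w * (s if switched else 1 - s) for w, s in zip(weights, switch_rates)]
    total = sum(weights)
    return [w / total for w in weights]


def prob_next_switch(posterior, switch_rates):
    """Posterior-weighted probability that the next outcome differs from the last."""
    return sum(p * s for p, s in zip(posterior, switch_rates))


if __name__ == "__main__":
    rates = [0.4, 0.5, 0.6]   # assumed: streaky, independent, switchy
    prior = [1 / 3] * 3       # assumed: uniform causal uncertainty
    for seq in ["T", "TTTT", "THTH"]:
        post = posterior_over_switch_rates(seq, rates, prior)
        print(seq, [round(p, 3) for p in post], round(prob_next_switch(post, rates), 3))
```

What the agent expects next depends entirely on how its remaining uncertainty is balanced across the hypotheses, which is the quantity the abstract's argument turns on.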

😭2